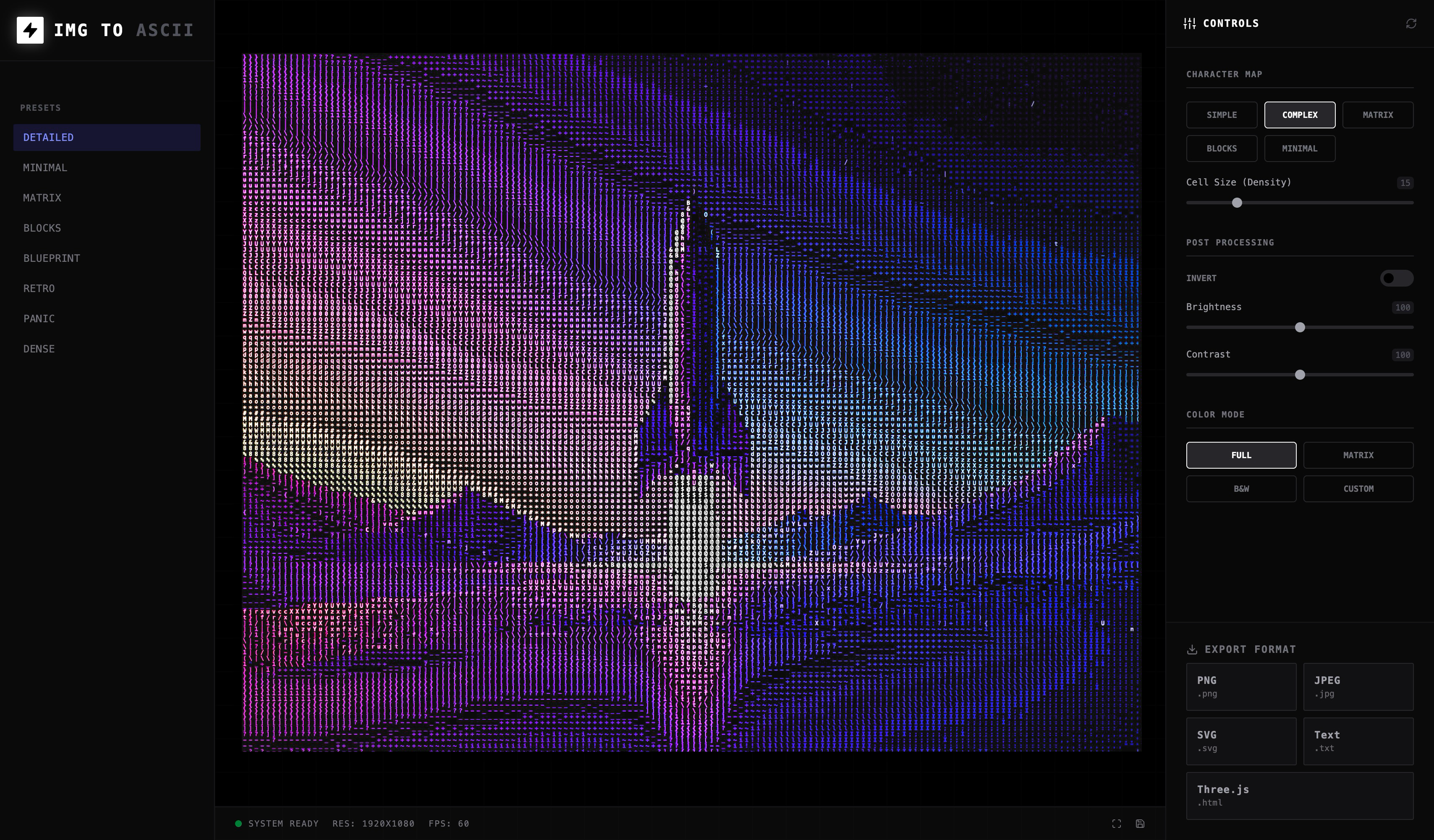

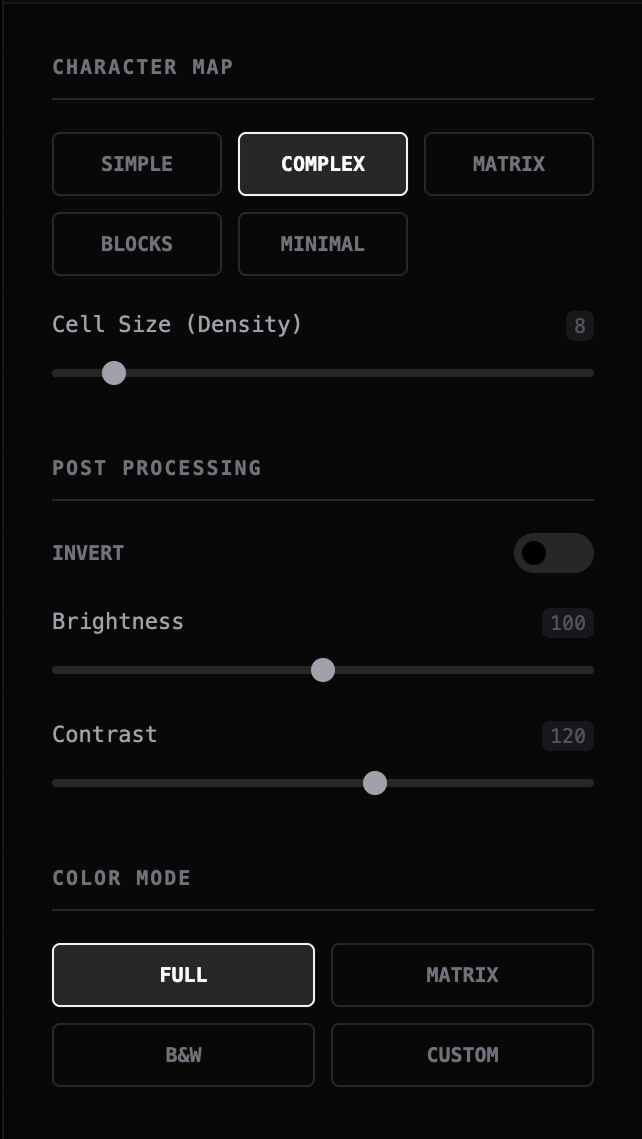

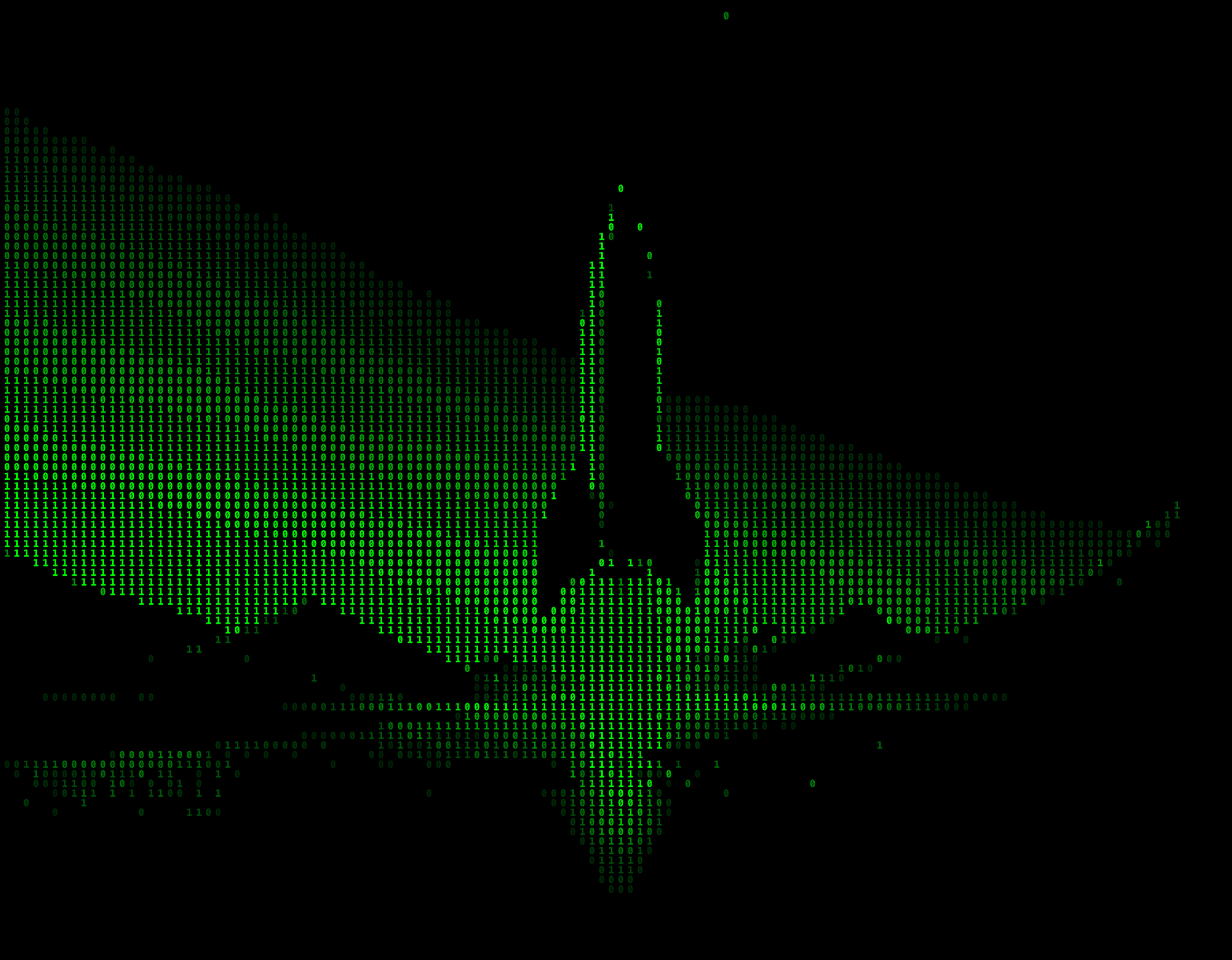

There is a recurring gap in creative tooling: Photoshop-level tools are powerful but too heavy for rapid exploration, code-based visual tools are flexible but inaccessible to most designers, and experimental generators produce interesting outputs but offer weak control and poor export paths.

That leaves a practical need unmet: a lightweight tool that helps visual designers, art directors, and digital creators quickly turn ordinary images into stylized assets, with enough control that the results feel intentional rather than random.