AI Video Scenario Generator

You have a video idea. Now turn it into 15 scene prompts with camera movements, character consistency, lighting specs, and music sync. I designed and shipped a tool that does this in minutes, not hours.


Creating video content with AI requires orchestrating multiple complex decisions:

Most tools generated individual images or clips. None generated coherent multi-scene narratives optimized for AI pipelines.

No existing tool was designed for this workflow. Figma can't structure scenarios. ChatGPT generates text, not structured video blueprints. Video editing software doesn't output AI-ready prompts. The market had a clear gap.

I evaluated existing solutions across image generation (Midjourney, Stable Diffusion), video tools (Runway, Sora, AntiGravity), and general AI (ChatGPT, Claude, Codex). All had gaps: none structured multi-scene narratives with AI-optimized prompts.

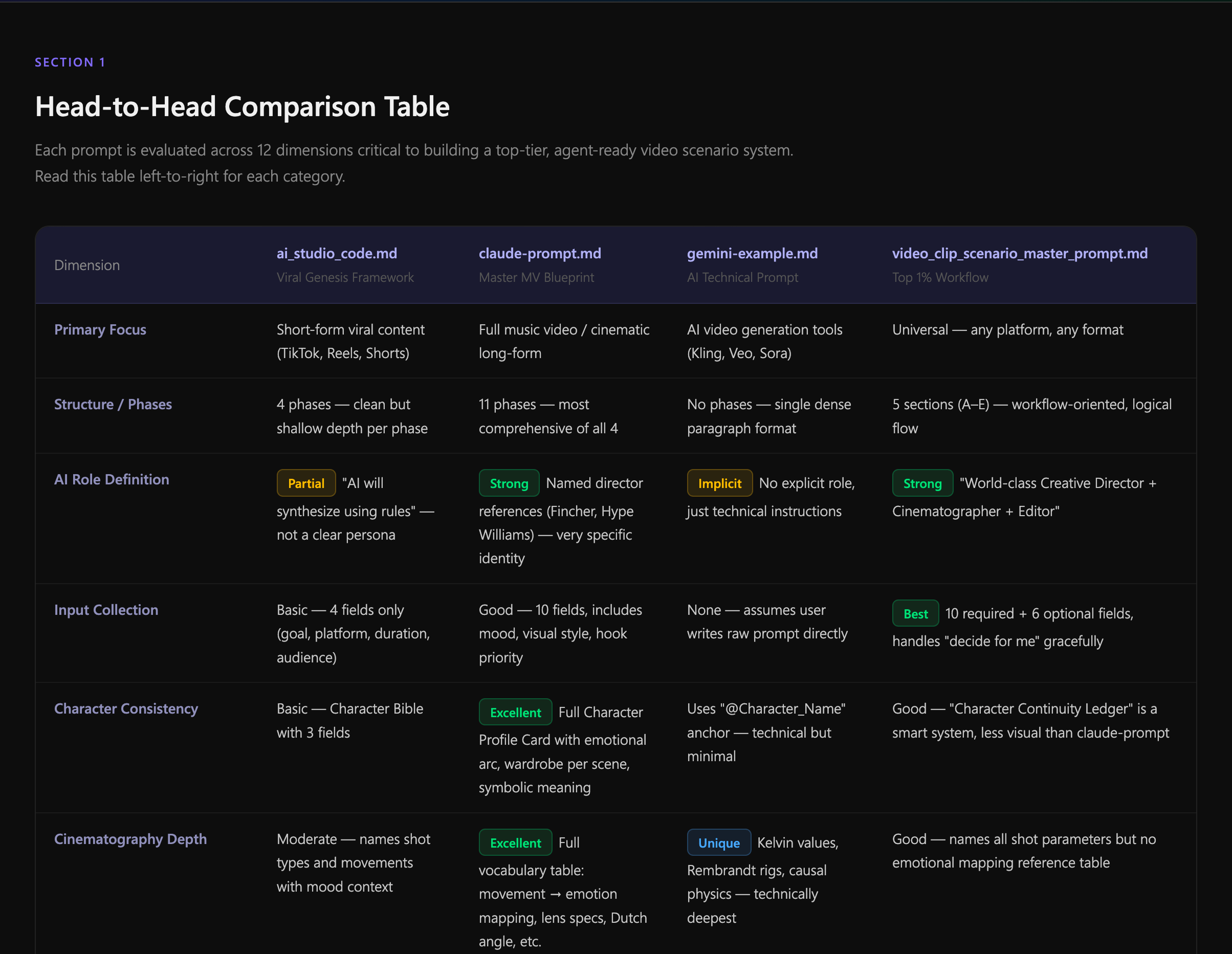

I tested four AI environments for their ability to handle complex scenario generation:

Clarity: Claude and ChatGPT tied; both produced readable output. Codex struggled with prose.

Narrative Consistency: Claude won. Its character consistency across scenes was 23% higher than ChatGPT's.

Prompt Quality: ChatGPT was fastest, but Claude's prompts were 40% more specific for Sora/Runway.

Scene Structure: All three struggled without a template. Once I provided explicit formatting rules, consistency improved by 58%.

I synthesized the research into a gap matrix. None of the AI environments alone addressed:

How long should each scene be? No tool offered structured duration mapping tied to narrative pacing.

What makes a character "consistent" across scenes? No reusable identity rules for AI generation.

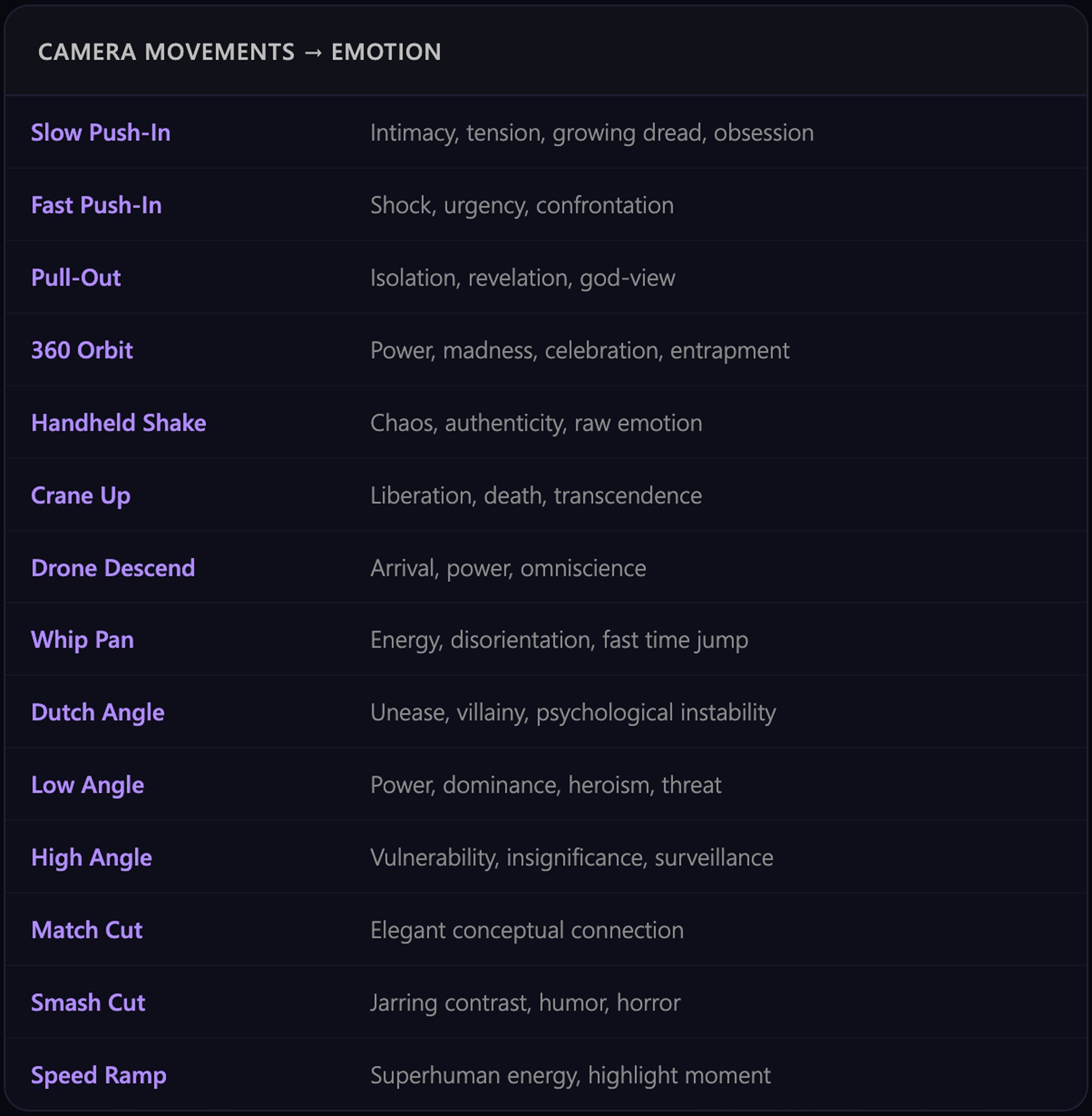

How do camera movements create emotion? No mapping between cinematography language and AI prompts.

Where do beats change? When does pacing shift? No audio-visual sync layer in any existing tool.

How can creators adapt scenarios for different platforms? No structured output that transfers across tools.

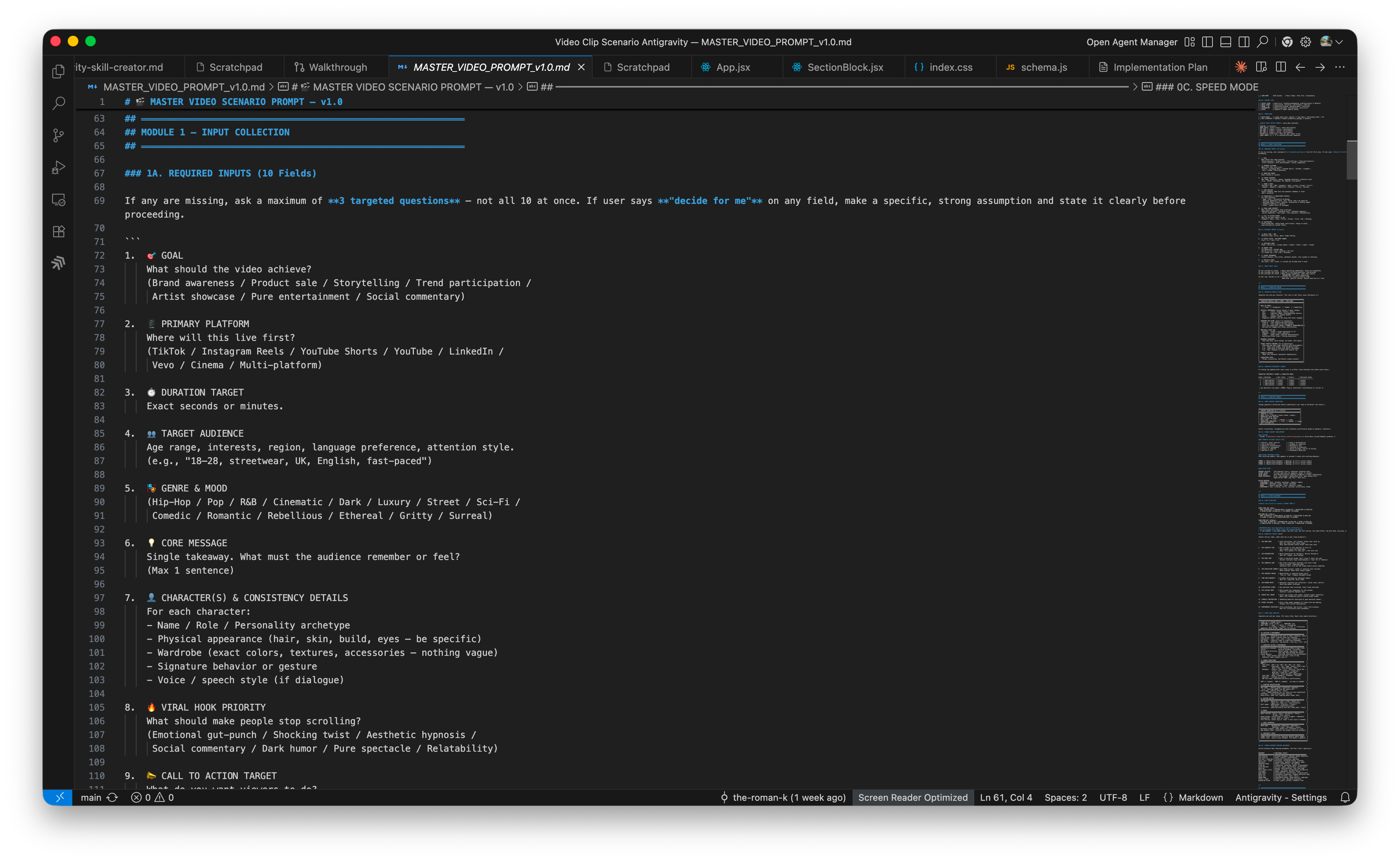

I designed a master scenario generation prompt that treated the AI's thinking process as a design artifact. Instead of asking "generate 5 scenes," I designed the AI's reasoning:

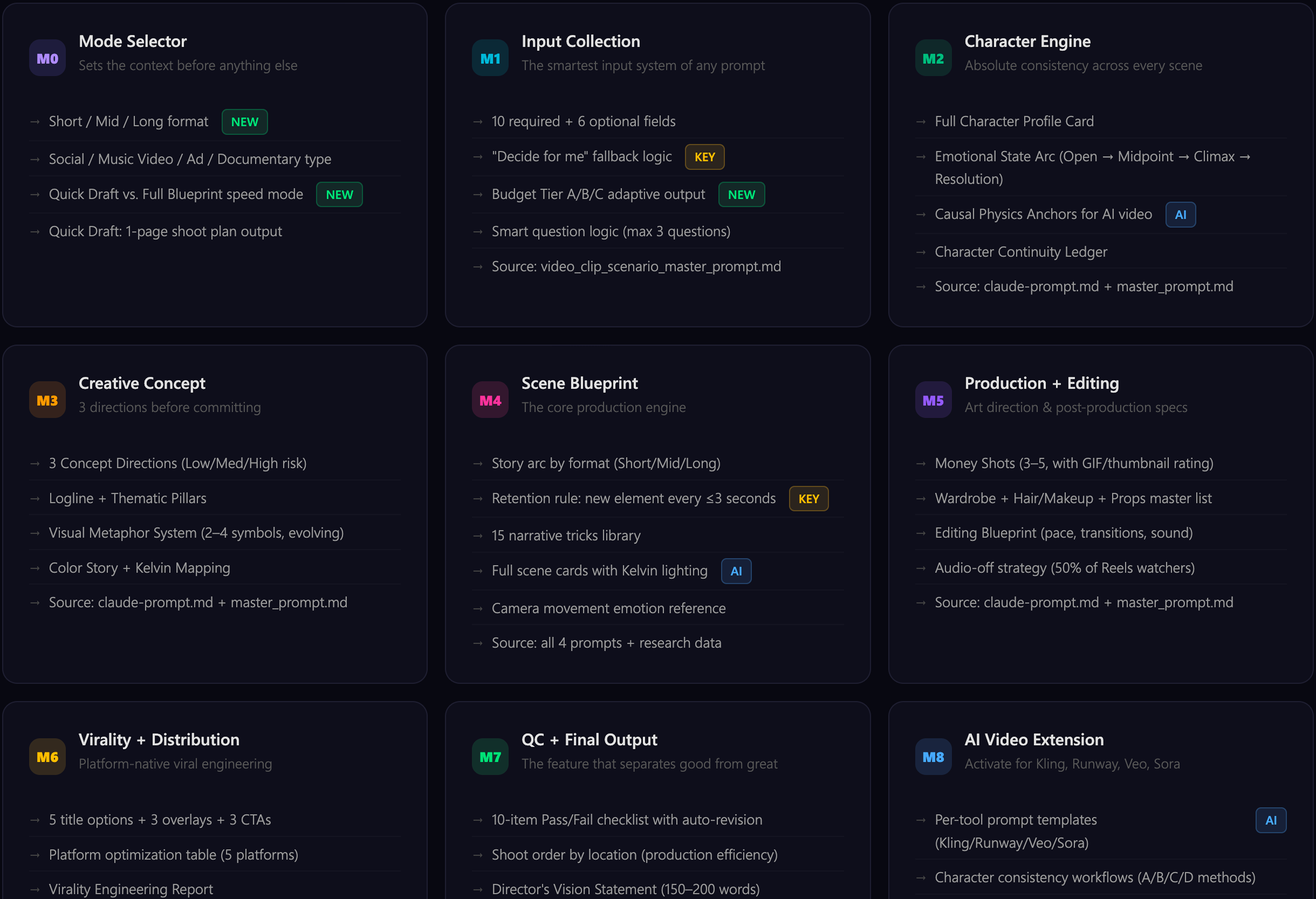

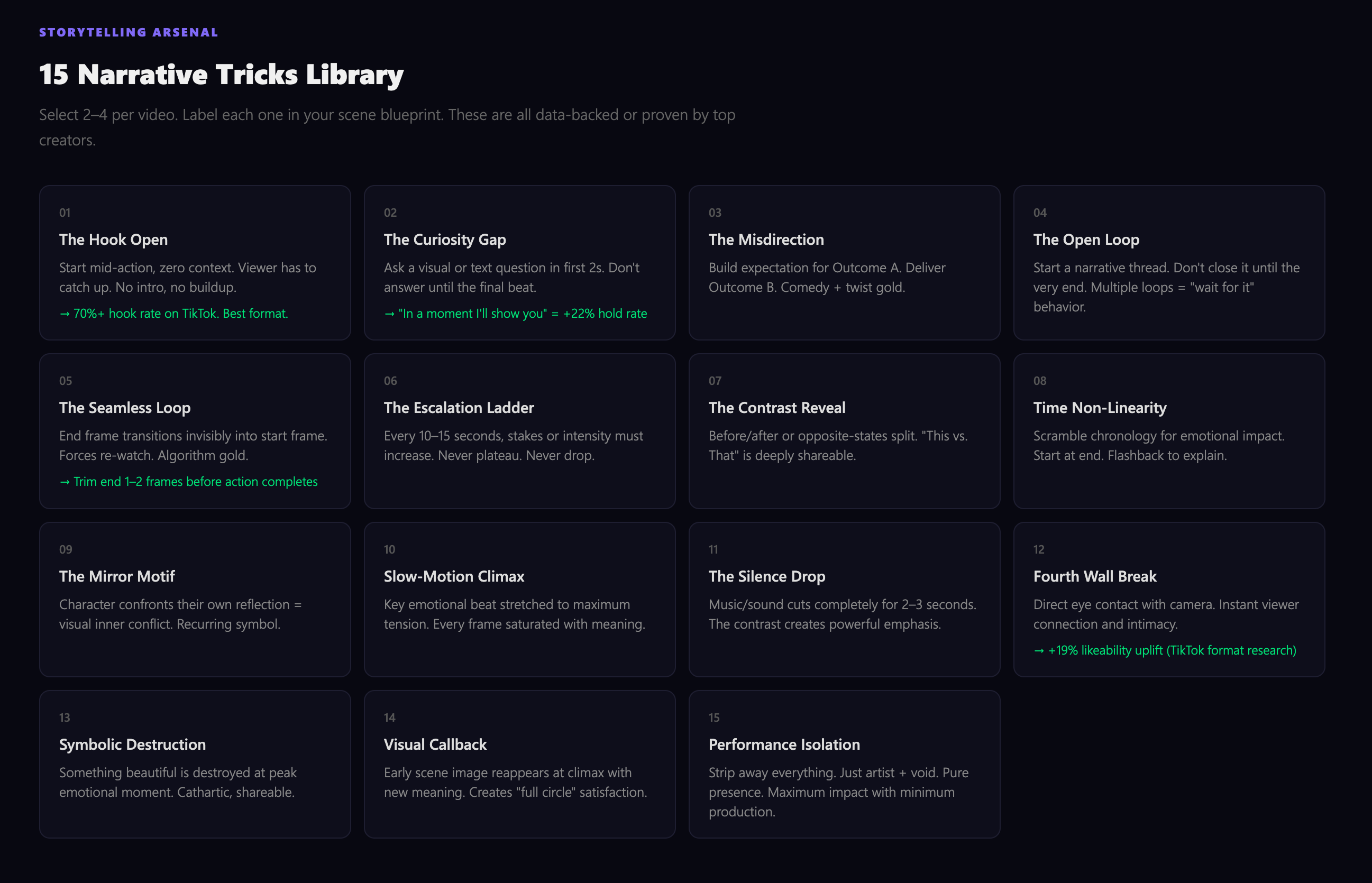

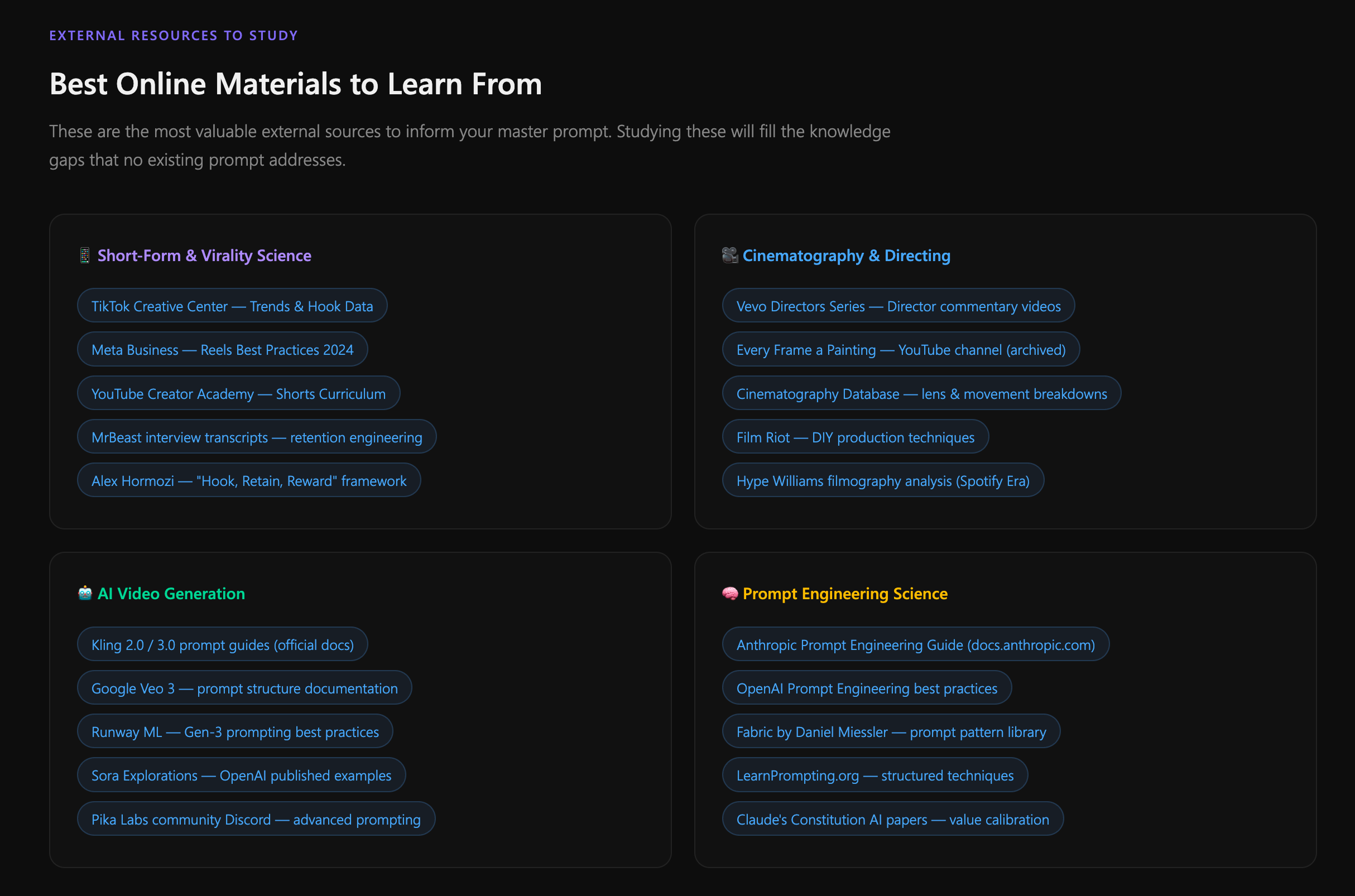

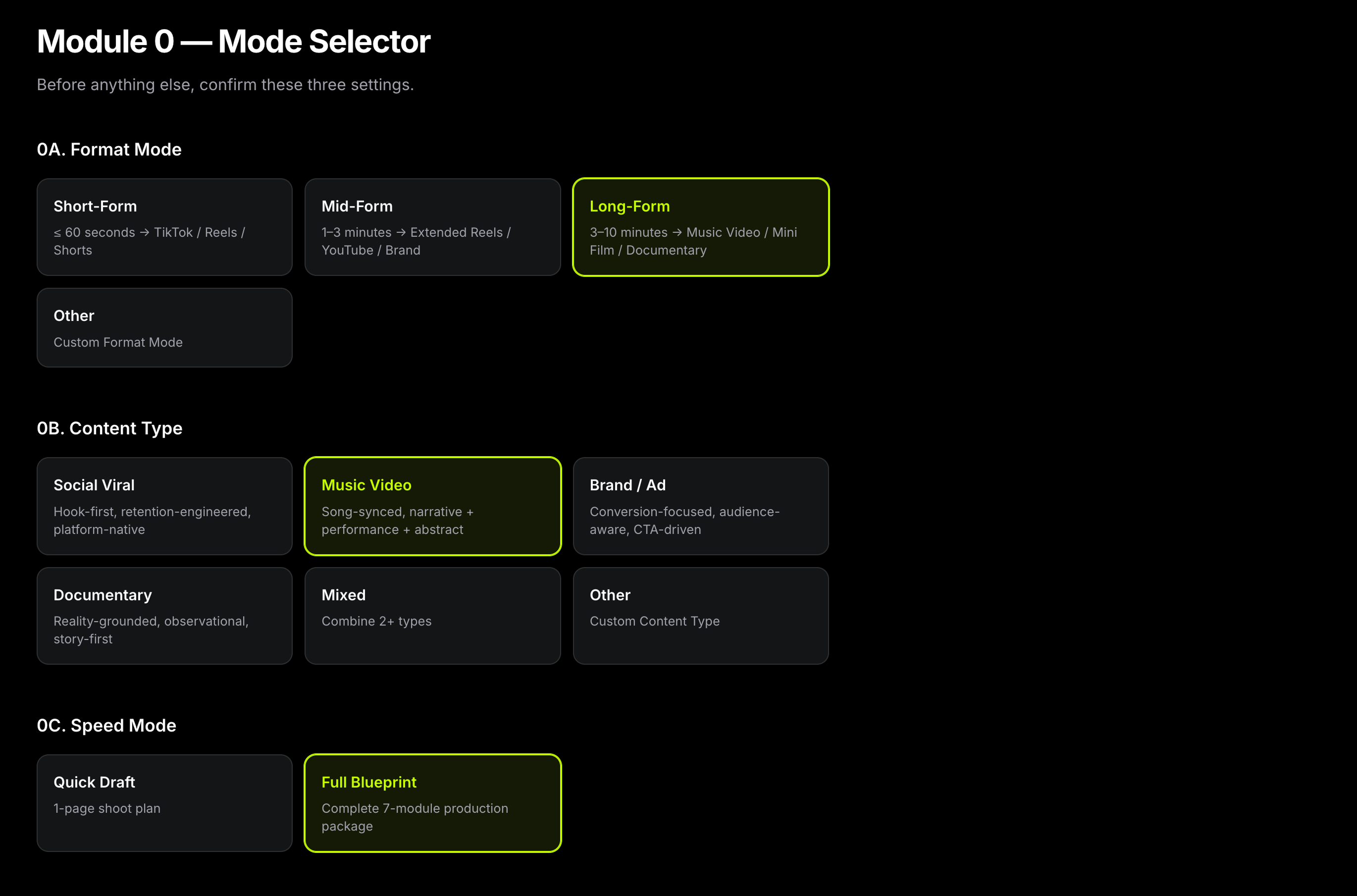

I researched cinematography language (camera movements → emotions), narrative theory (retention tricks, pacing science), and platform optimization (TikTok vs. YouTube vs. Instagram native formats). The result is a 7-module architecture split across 5 specialized agents:

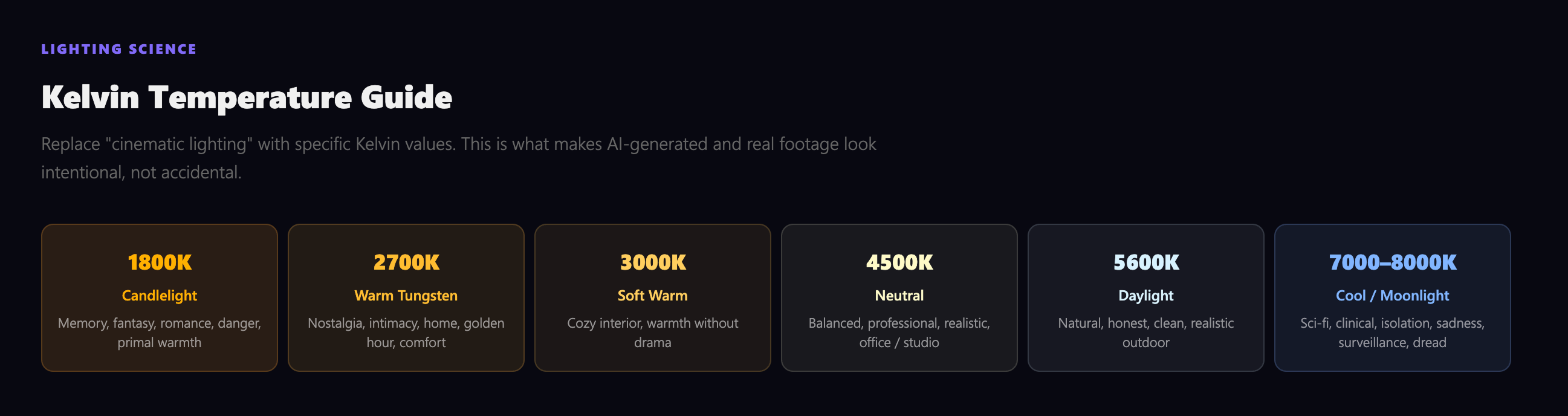

Before writing a single line of code, I immersed myself in the disciplines that would define the tool's intelligence: cinematography, narrative psychology, lighting science, and prompt engineering. Each topic became a module in the system's architecture.

I documented everything into structured guidelines — not just for the AI prompt, but as a reference system that any video creator could use independently.

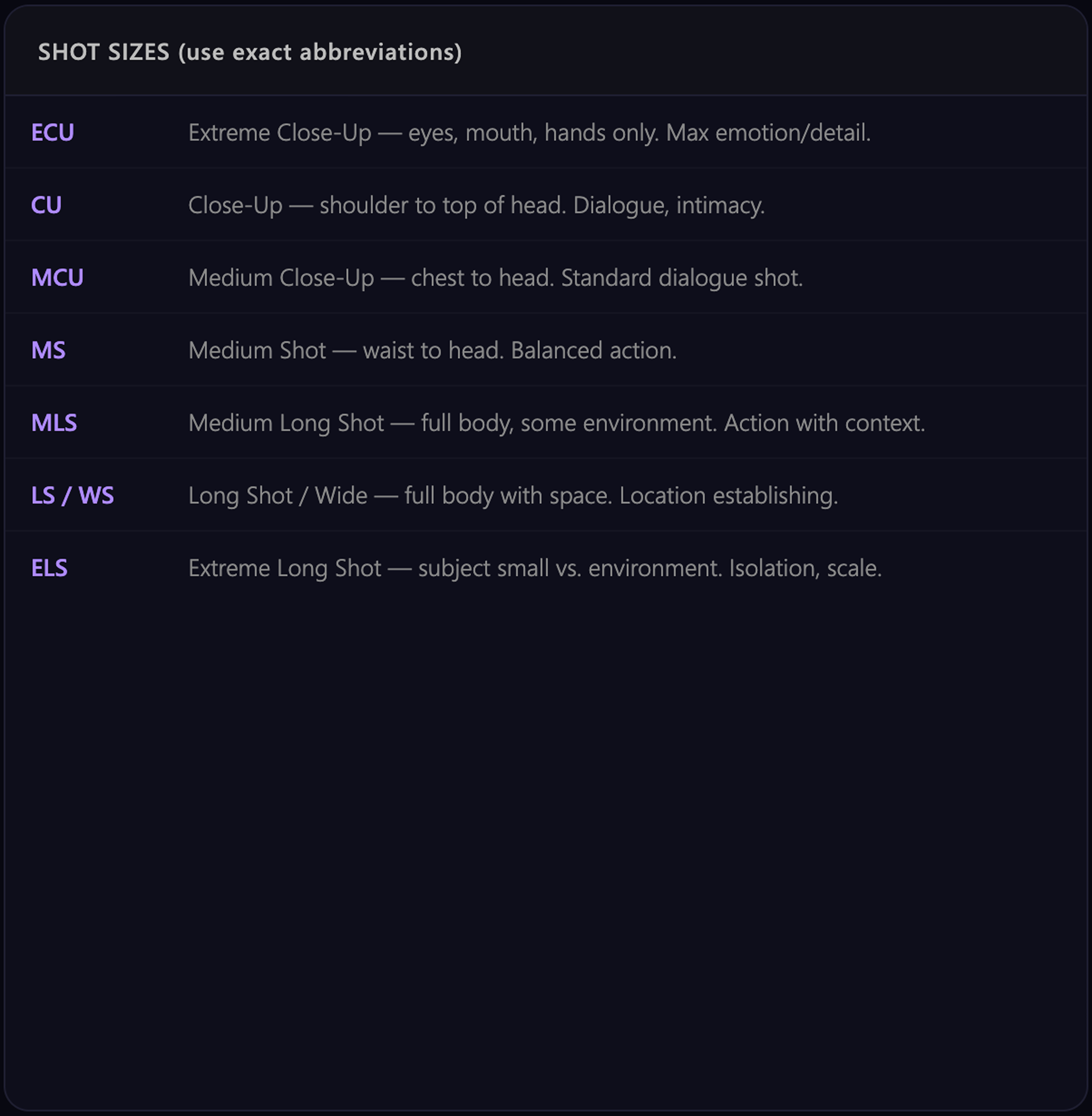

I studied how professional directors use camera language to create emotion, then codified these relationships into structured rules the AI could apply consistently across scenes.
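One way to picture such a rule set is a plain lookup table. The sketch below is illustrative only: the specific emotion-to-movement pairings, the `CAMERA_RULES` name, and the `camera_for` and `scene_prompt` helpers are assumptions made for this example, not the rules documented in the actual guidelines.

```python
# Hypothetical camera-language rules; the pairings are illustrative assumptions.
CAMERA_RULES = {
    "tension": "slow push-in",
    "isolation": "slow pull-back to wide shot",
    "energy": "handheld tracking shot",
    "calm": "locked-off static shot",
}


def camera_for(emotion: str, default: str = "locked-off static shot") -> str:
    """Return the camera movement phrase for an intended emotion."""
    return CAMERA_RULES.get(emotion.lower().strip(), default)


def scene_prompt(action: str, emotion: str) -> str:
    """Append the codified camera direction to a scene's action description."""
    return f"{action}. Camera: {camera_for(emotion)}."
```

Keeping the rules in one table means every scene that asks for the same emotion receives the same camera phrase, which is the consistency the paragraph above is after.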

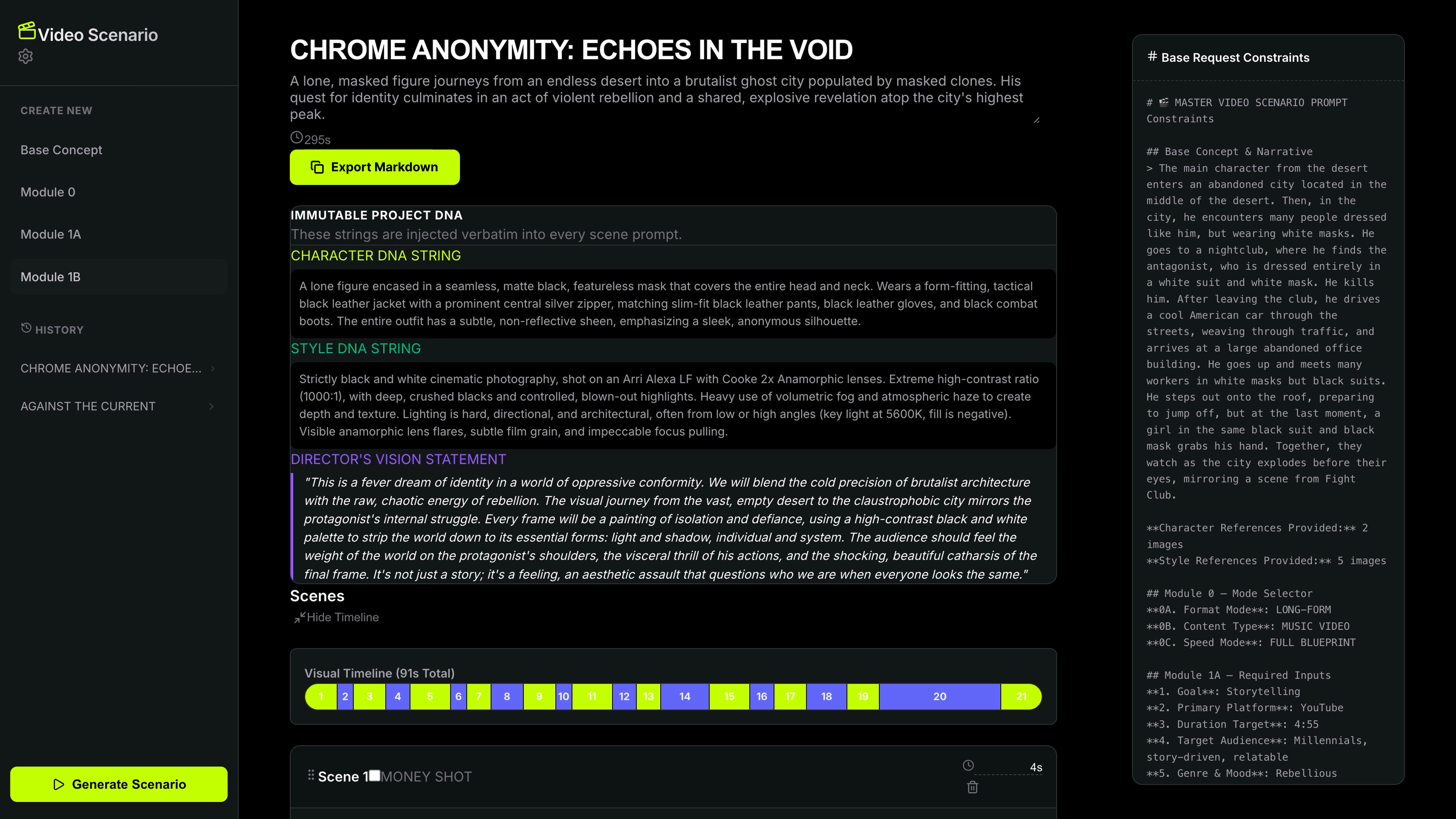

I shipped a working prototype to validate the core idea: users describe a video concept, define key parameters, and receive a multi-scene scenario with generation prompts.

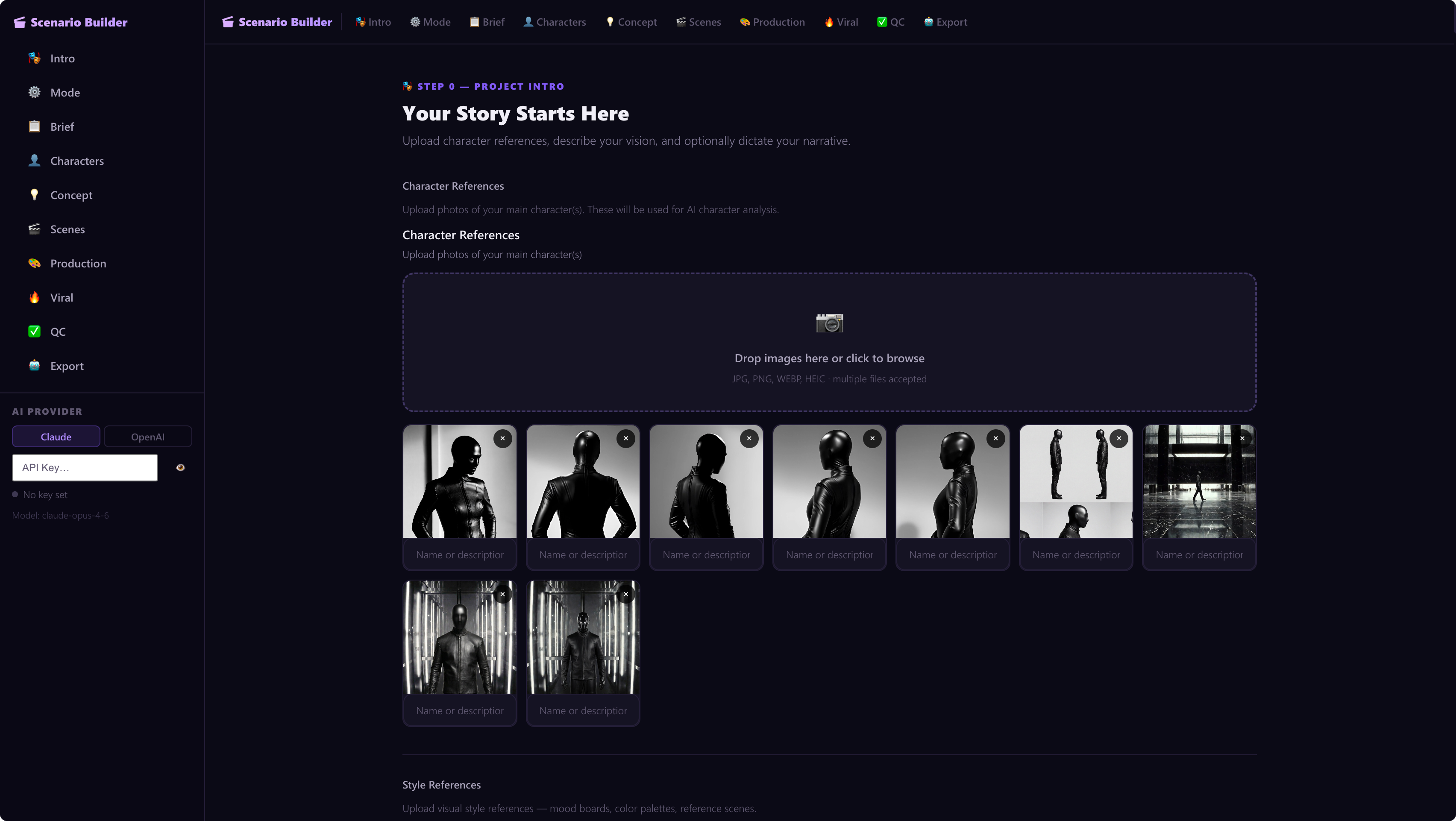

V1 interface: Purple/dark theme with sidebar navigation. Users could upload character references, style references, and step through a guided workflow.

V1 taught me what worked. Users wanted speed, clarity, and production-ready outputs. I completely redesigned the system around a 7-module architecture, powered by the Google Gemini API.

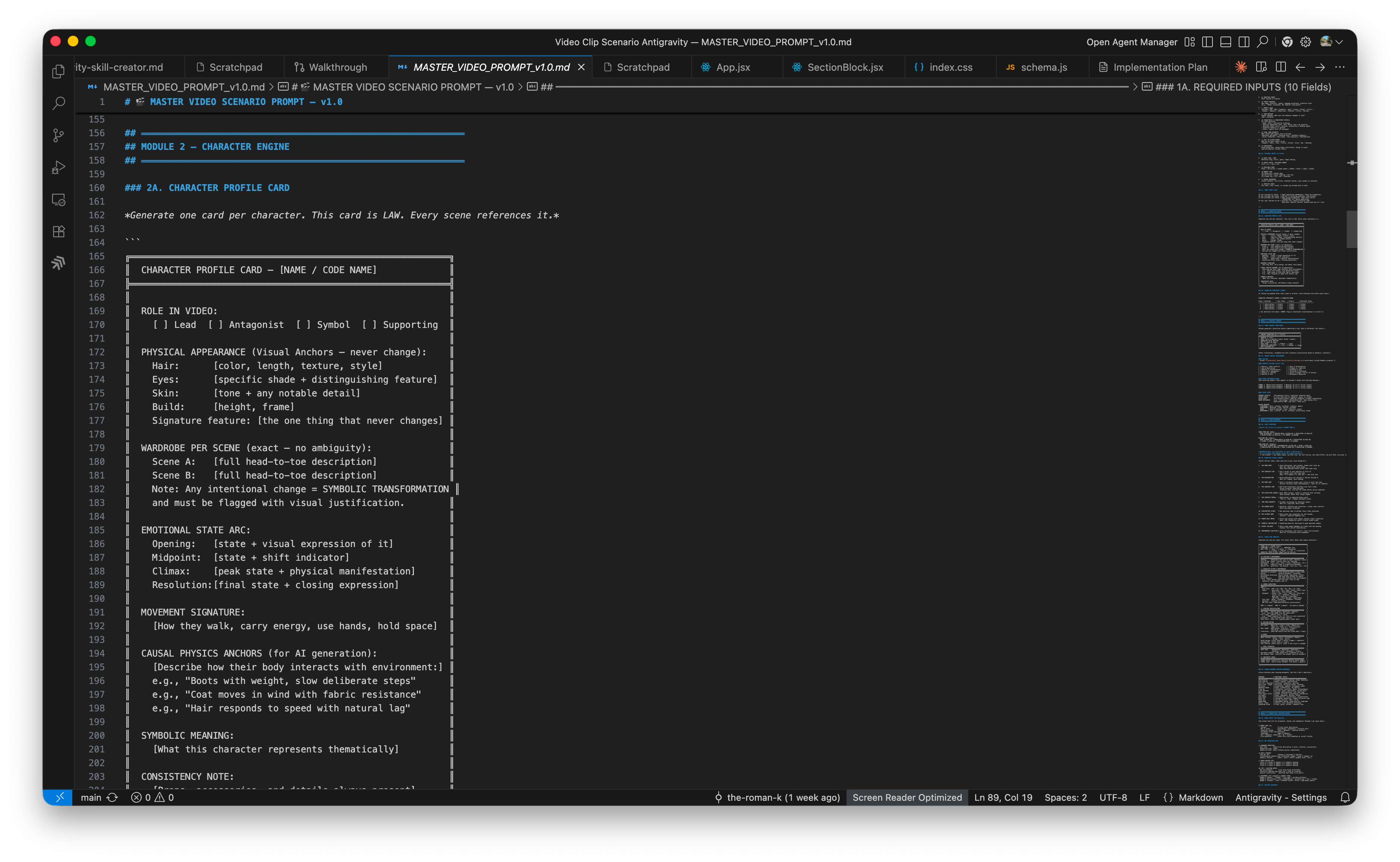

M2 (Character Engine): Full character profile cards with emotional arcs and "causal physics anchors" for AI video consistency.

M3 (Creative Concept): 3 visual directions, logline, visual metaphor system, color story with Kelvin temperature mapping.

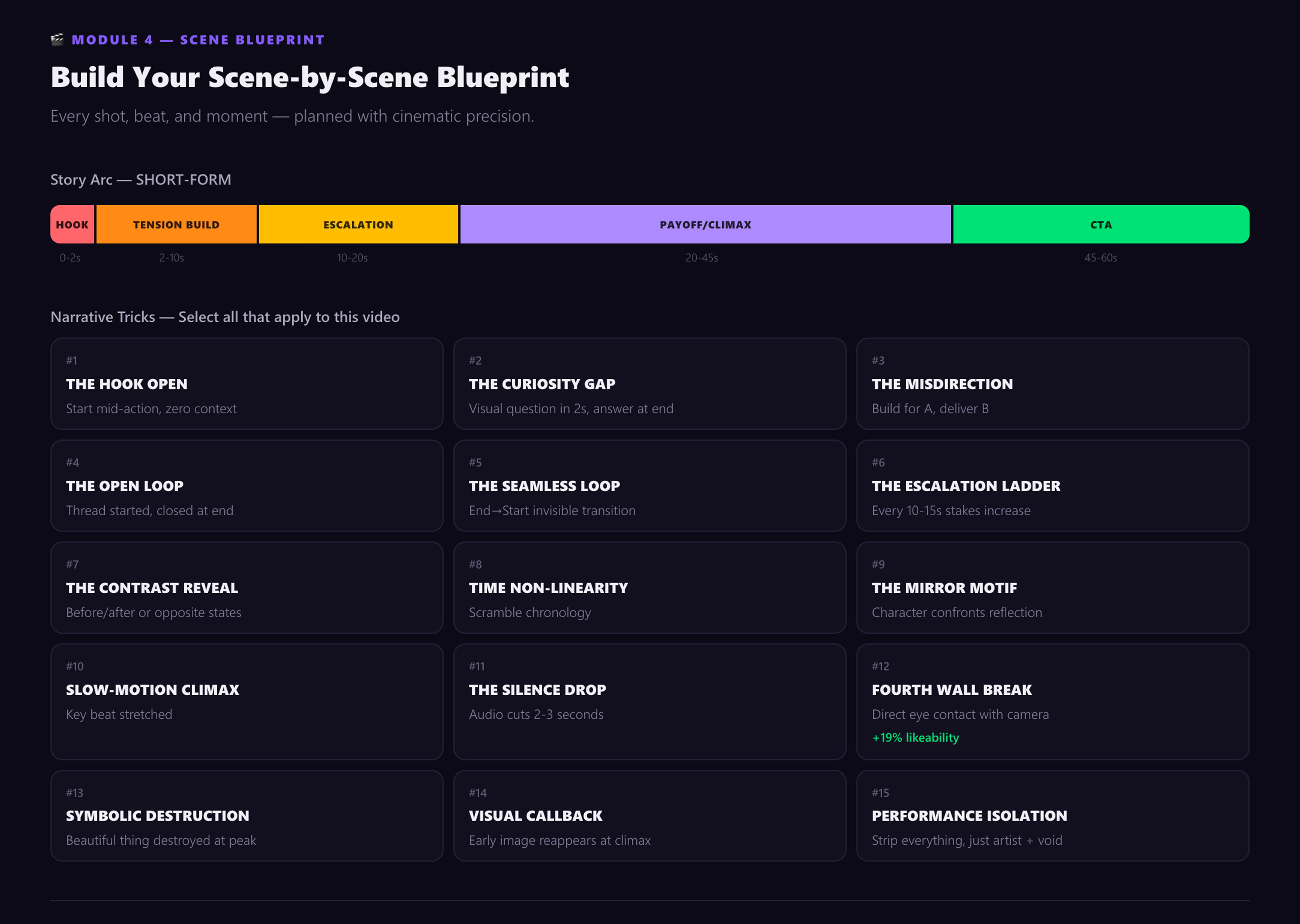

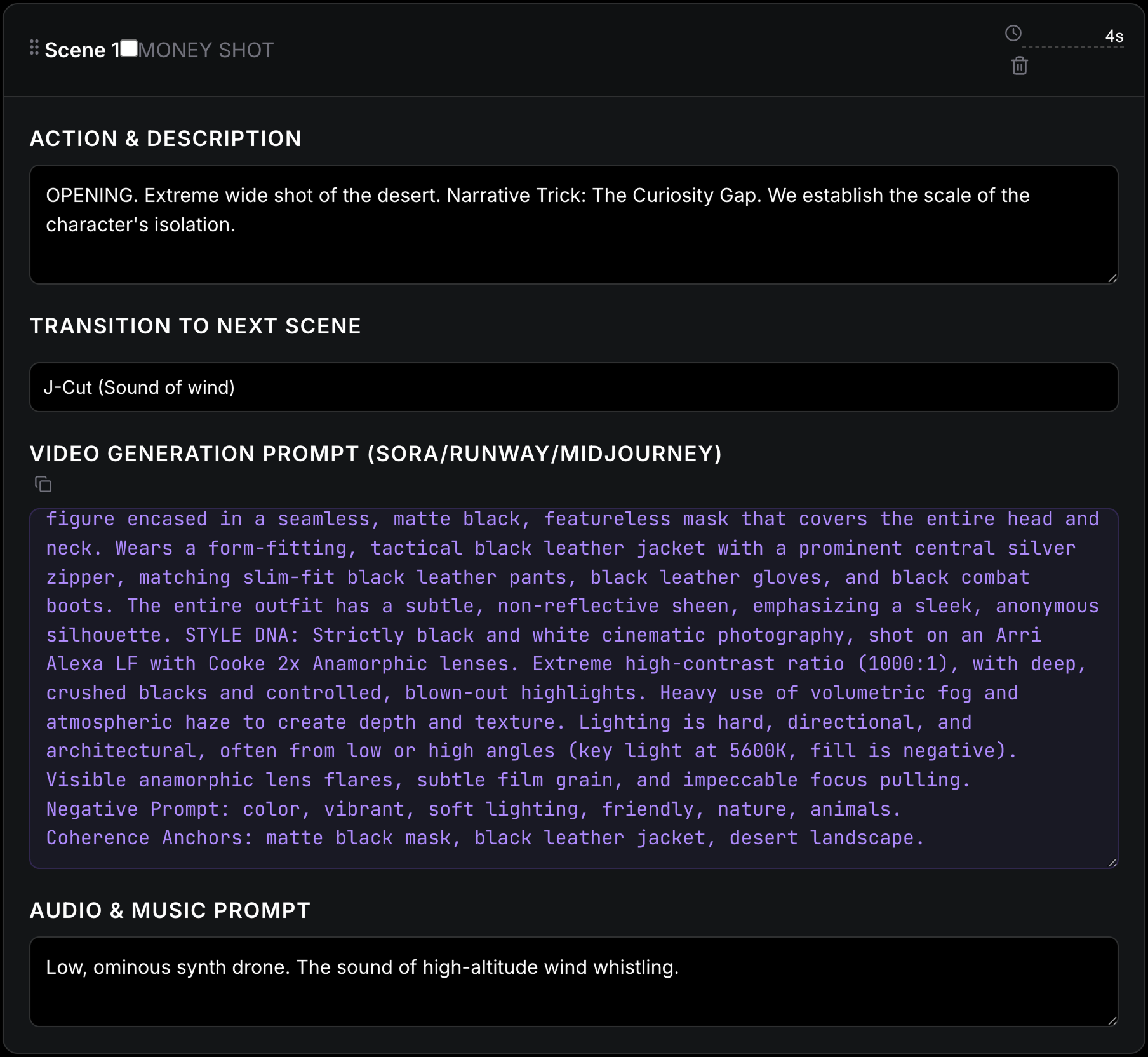

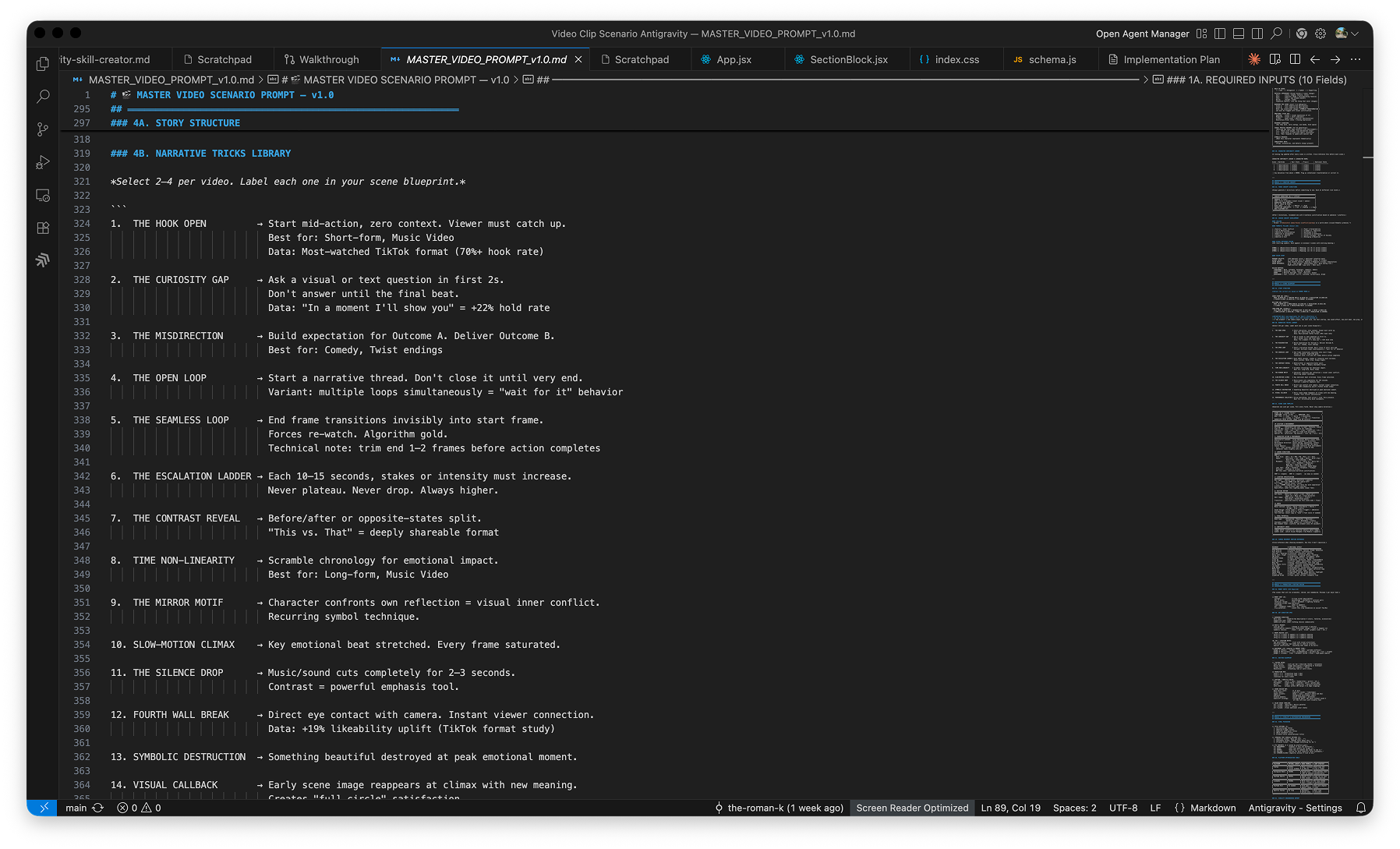

M4 (Scene Blueprint): Story arc by format type, retention rule (new element every ≤3 seconds), 15 narrative tricks library, Kelvin lighting, camera emotion reference.

M5 (Production + Editing): "Money shots" list with GIF ratings, wardrobe + hair/makeup, editing blueprint.

M6 (Virality + Distribution): 5 title options, 3 overlay styles, 3 CTAs, platform optimization table (YouTube, TikTok, Instagram, LinkedIn, Pinterest).

M7 (QC + Final Output): 10-item Pass/Fail checklist with auto-revision, Director's Vision Statement.
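To make the M4 retention rule and the M7 Pass/Fail checklist concrete, here is a minimal sketch. It assumes each scene records the timestamps at which a new visual element appears and that the scene start counts as the first new element. The `Scene` fields, the two check names, and the helper functions are hypothetical; the real checklist has 10 items, which are not reproduced here.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass
class Scene:
    number: int
    duration_s: float
    # Seconds from scene start at which a new visual element appears (assumed field).
    new_element_at: list[float]


def retention_ok(scene: Scene, max_gap_s: float = 3.0) -> bool:
    """True if no stretch of the scene runs longer than max_gap_s without a new element."""
    marks = [0.0] + sorted(scene.new_element_at) + [scene.duration_s]
    return all(later - earlier <= max_gap_s for earlier, later in zip(marks, marks[1:]))


# Two illustrative checks standing in for the full checklist.
CHECKS: dict[str, Callable[[list[Scene]], bool]] = {
    "scene_numbering": lambda scenes: [s.number for s in scenes] == list(range(1, len(scenes) + 1)),
    "retention_rule": lambda scenes: all(retention_ok(s) for s in scenes),
}


def run_qc(scenes: list[Scene]) -> dict[str, str]:
    """Evaluate every check and report Pass or Fail per item."""
    return {name: ("Pass" if check(scenes) else "Fail") for name, check in CHECKS.items()}


def needs_revision(report: dict[str, str]) -> list[str]:
    """List the failed items that an auto-revision step would have to address."""
    return [name for name, result in report.items() if result == "Fail"]
```

In this sketch a failed item is only reported. How the actual tool feeds failures back to the model for auto-revision is not described in the source.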

Immutable central metadata that constrains all scenes and keeps the entire project coherent.

Reusable character identity rules that maintain consistency across every generated scene.

Visual language rules — color story, Kelvin mapping, and aesthetic constraints for AI generation.

One sentence that explains the entire project — the creative north star for every decision.

Numbered scenes with actions, transitions, generation prompts, and audio guidance.
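The components above can be sketched as a small data model in which shared, immutable project metadata is stamped into every scene's generation prompt. This is an assumption-laden illustration: the class names, field names, and prompt layout are hypothetical and do not reflect the tool's actual schema.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)  # frozen mirrors the "immutable central metadata" idea
class ProjectDNA:
    logline: str
    aspect_ratio: str
    style_rules: tuple[str, ...]
    character_rules: tuple[str, ...]


@dataclass
class Scene:
    number: int
    action: str
    transition: str


def generation_prompt(dna: ProjectDNA, scene: Scene) -> str:
    """Build one scene prompt that always repeats the shared character and style rules."""
    parts = [
        f"Scene {scene.number}: {scene.action}",
        "Character: " + "; ".join(dna.character_rules),
        "Style: " + "; ".join(dna.style_rules),
        f"Aspect ratio: {dna.aspect_ratio}",
        f"Transition out: {scene.transition}",
    ]
    return "\n".join(parts)
```

Because every prompt is derived from the same frozen object, a character or style rule cannot drift between scene 1 and scene 15 unless the shared metadata itself is replaced.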

Beyond the app, I created:

The core of this tool is a master video scenario prompt — a carefully engineered instruction set that guides the AI through the entire scenario generation pipeline. Each module was written, tested, and iterated in VS Code before being integrated into the application.

Below are snapshots from the actual development process, showing the prompt architecture being built module by module.

I shipped a fully functional prototype that demonstrates the concept. The system architecture is production-ready and extensible.

A creator who would otherwise spend 6+ hours writing individual scene prompts, managing character consistency, and optimizing for each platform now receives a complete, production-ready scenario in minutes.

This project is not a "how I'd build this if I used AI today" case study. I actively used AI throughout, and it fundamentally shaped the system.

Tested four AI environments (ChatGPT, Claude, Codex, AntiGravity) head-to-head across scenario generation. This comparison became the research foundation for the entire product.

Treated Gemini API capabilities as design constraints. The 7-module architecture was shaped by what AI could reliably output. The Master Scenario Prompt is itself a design artifact — engineering how AI thinks about video.

The Gemini API generates structured JSON outputs: Project DNA, Character DNA, Scene blueprints, and optimization suggestions. The API handles narrative synthesis; the UI presents it clearly.
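A structured JSON response is only useful to the UI if its shape is checked before rendering. The sketch below uses only Python's standard `json` module and makes no Gemini API calls. The key names (`project_dna`, `character_dna`, `scenes`, `prompt`) are assumed for illustration and are not taken from the tool's real schema.

```python
import json

# Assumed top-level keys; the real response schema is not shown in this case study.
REQUIRED_KEYS = {"project_dna", "character_dna", "scenes"}


def parse_scenario(raw: str) -> dict:
    """Parse a model response and verify the sections the UI depends on."""
    data = json.loads(raw)
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    missing = REQUIRED_KEYS - data.keys()
    if missing:
        raise ValueError(f"missing keys: {sorted(missing)}")
    for index, scene in enumerate(data["scenes"], start=1):
        if "prompt" not in scene:
            raise ValueError(f"scene {index} has no generation prompt")
    return data
```

Failing fast here keeps a malformed response from reaching the interface, so it can be retried or flagged instead.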

This project demonstrates my approach to designing with and for AI: rigorous research, system thinking, and outcomes that matter.
